Two Refutations of Evolutionary Beliefs

Intro

Below I refute two core beliefs of evolutionists:

1. that life forms arose from inanimate objects via random processes, and

2. that species can be transformed into other species via random processes,

using statistical and logical analyses, respectively. But first, some background.

Darwinism, the predecessor of today’s larger evolutionary theory, has rocked most societies by replacing their creation stories with hard data:

· there is a fossil record that implies that we’ve been preceded by a lot of life forms that are not documented in any religious text, and

· there is a process called natural selection, which, given an initial existing population, tends to favor members of populations that are well adapted to their environments, contradicting the former vague belief in eternally static life forms.

Fossils clearly exist, and relatively small degrees of natural selection are easily demonstrated both in the lab and in nature. Evidence seems to be on the side of Darwin. But evolutionists have added heavily to Darwin’s observations, and they haven’t limited their beliefs to ideas that are supported by evidence.

The theory of evolution has two components: Darwin’s original natural selection and a newer addition: spontaneous generation. When talking to evolutionists I generally have a strange experience: they talk about natural selection as if it were the only component of evolutionary theory, then they argue that natural selection is indisputably correct because of the ease with which it can be demonstrated, and finally they state that the whole issue of “evolution” is settled. One such person insisted adamantly that evolution is natural selection, and he became enraged at my suggestion that there must be more to it.

Natural selection cannot by itself produce new life forms. In an environment consisting entirely of rocks, air, and water there are only rocks, air, and water from which to select; the only possible outcomes of the selection are the rocks, the air, or the water, or some combination. You cannot select an amino acid from rocks and air and water, since there are no amino acids from which to select; something must construct the amino acid. That “something,” according to evolutionists who will admit that some construction process is necessary, is the random and spontaneous construction of complex objects from simple ones. I refer to this as “spontaneous generation.”

According to Wikipedia, spontaneous generation is a “superseded scientific theory” whose rejection is “no longer controversial among biologists.” According to the article, “It was hypothesized that certain forms, such as fleas, could arise from inanimate matter such as dust, or that maggots could arise from dead flesh.” Well then, I am relieved to learn that biologists have enough statistical sense to reject the idea that something as fantastically complex as a flea might directly appear from a ball of dust.

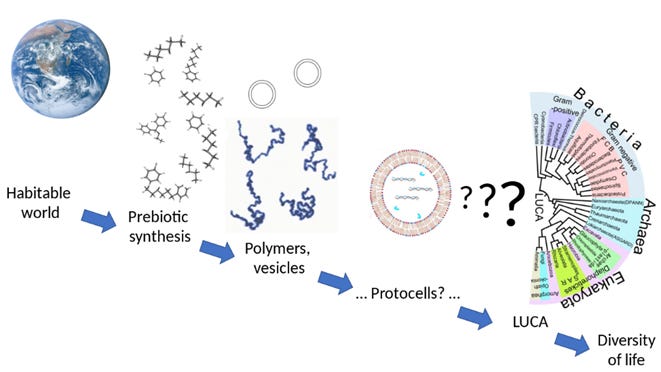

Spontaneous generation has, according to the article, been replaced by abiogenesis. The difference between the two is apparently just a matter of scale. “Abiogenesis” seems a bit less statistically preposterous because it reduces the complexity of spontaneous creations. Here is a diagram from the abiogenesis article:

The first step (the one called “prebiotic synthesis”) is perhaps believable. Simple chemical components of living organisms (such as amino acids) might arise spontaneously by applying some energy (e.g. sunshine, geothermal heat, cosmic rays) to the elements in water and air and rocks, producing compounds that contain a mere couple dozen atoms. But a survey of online literature suggests that even this simple step might not be plausible. There is a common belief that amino acids have been spontaneously created in a lab, but most of the online references discuss the single Miller-Urey experiment, which has apparently been discredited; subsequent studies suggest that its positive result was due to contamination from the experiment’s apparatus. We are left with the question: if the production of the most basic chemical building blocks of life in a laboratory is so trivial, why is there so little documented evidence of it?

The next step in the diagram involves a fantastic increase in complexity. We are expected to believe that polymer vesicles or something like them somehow existed in a purposeless environment producing the highly purposeful components of “protocells.” This step alone is almost as fantastic as the idea of fleas arising from dust.

To me, spontaneous generation and abiogenesis are the same thing, just on different scales. I prefer the term “spontaneous generation” because it is more meaningful to the average person. My whole purpose here is to discredit this notion.

Statistical Refutation

We’ll give evolutionists the benefit of the doubt by assuming that all twenty of the standard protein-building amino acids have somehow been formed spontaneously in huge quantities. We also presume the existence of some mechanism which inexplicably and regularly assembles amino acids into chains. The existence of such a mechanism in a purposeless environment constantly assembling seemingly purposeful molecules is even more statistically absurd than the construction of the proteins we’re going to discuss here, but we must start with something.

How long would it take Mother Nature to produce a single protein from such a mechanism operating on randomly available amino acids? Keep in mind that this protein would not even be a living thing, but just a tiny component of living things. As such it would have no survival advantage, so in a hostile environment there would be no reason to expect either it or its predecessors to survive any more than any other molecule.

Let’s start with a simpler analogous problem that produces more reasonable numbers, so that we can get some intuition for this: we will FLIP a coin 10 times in a row and get heads every time. An ATTEMPT is a series of flips that ends in either failure (any "tail") or success (10 heads in a row). How many ATTEMPTS are required before we have a better than 50/50 chance of success?

• The probability of a successful FLIP P(F) = 0.5.

• The probability of succeeding 10 times in a row, i.e. the probability of a successful ATTEMPT P(A) = P(F)^10 = 1/1024.

• The probability of failing during an ATTEMPT P(~A) = 1 - P(A) = 1023/1024.

• The probability of failing every ATTEMPT in a sequence of N ATTEMPTS is P(~A)^N.

• We have greater than 50/50 chance of success when the probability of failing all ATTEMPTS is less than 0.5, so: P(~A)^N < 0.5.

• Taking the log of both sides: log(P(~A)^N) < log(0.5)

• Applying the logarithm exponent rule we get: N * log(P(~A)) < log(0.5)

• Solving for N yields: N > log(0.5) / log(P(~A)) [we flipped the inequality because we divided both sides by a number known to be negative]

• Since ATTEMPTS are integral, we want the minimum integer G where G > min(N).

Substituting the value of P(~A) [i.e. 1023/1024] yields min(N) ≈ 709.436 and G = 710. So 710 ATTEMPTS are required before we have better than 50/50 odds of seeing a single successful sequence of ten heads in a row.
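The coin-flip arithmetic above can be checked with a few lines of Python. This is only a sketch of the formula as derived here; the function name min_attempts is my own invention for illustration.

```python
import math

def min_attempts(p_success: float) -> float:
    """Boundary value of N at which (1 - p_success)**N drops to 0.5."""
    # log1p(-p) evaluates log(1 - p) accurately even when p is very small.
    return math.log(0.5) / math.log1p(-p_success)

p_flip = 0.5                      # P(F): one flip comes up heads
p_attempt = p_flip ** 10          # P(A): ten heads in a row, 1/1024
n_min = min_attempts(p_attempt)   # min(N)
g = math.floor(n_min) + 1         # smallest integer strictly greater than min(N)

print(f"min(N) = {n_min:.3f}, G = {g}")
```

Running it should reproduce the figures quoted above (min(N) of roughly 709.436 and G = 710).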

If you understand the above, then you should understand that the same formula applies to protein formation, since proteins are just sequenced amino acids. Using a super high-precision online calculator such as the Keisan Online Calculator, we can compute the kinds of fantastic numbers that the amino acid calculation produces. For formation of a protein of length L:

• The probability of a successful “flip” [i.e. amino selection] P(F) = 0.05, since there are 20 amino acids (there is an assumption here, which is that all 20 are equally available).

• The probability of selecting the next correct amino acid L-times-in-a-row, i.e. the probability of a successful ATTEMPT at producing the whole protein, is P(A) = P(F)^L.

• The probability of failing during an ATTEMPT is P(~A) = 1 - P(A).

We’ll dispense with the integer requirement here since the numbers are so large and we’re just trying to get a sense of magnitude. Our formula for computing minimum N for a protein of length L is:

min(N) = log(0.5) / log(1 - 0.05^L)

Results:

· The shortest known protein is 20 aminos long. Our formula produces min(N) ≈ 7E+25. That's 70 trillion-trillion ATTEMPTS to likely produce one instance of the shortest protein.

· A typical hemoglobin molecule contains multiple amino chains, the shortest of which contains 141 aminos. Likely producing one such shortest chain requires: min(N) ≈ 2E+183.
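For readers without a high-precision calculator at hand, ordinary double-precision Python is enough for these two cases, provided log(1 - p) is computed with math.log1p. A naive math.log(1 - p) rounds 1 - p to exactly 1 and returns zero. The sketch below assumes, as the text does, 20 equally available amino acids; the function name is hypothetical.

```python
import math

AMINO_ACIDS = 20  # assumption from the text: all 20 are equally available

def min_attempts_for_chain(length: int) -> float:
    """min(N) for randomly assembling one specific chain of `length` aminos."""
    p_attempt = (1 / AMINO_ACIDS) ** length      # P(A) = 0.05^L
    # log1p keeps precision when p_attempt is far below machine epsilon.
    return math.log(0.5) / math.log1p(-p_attempt)

for length in (20, 141):
    print(f"L = {length}: min(N) ~ {min_attempts_for_chain(length):.1e}")
```

The two printed values should agree in magnitude with the 7E+25 and 2E+183 figures above. For much longer chains, 0.05^L underflows a double and the calculation has to move to logarithms.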

You might argue that the simplest life forms do not contain hemoglobin, and you’d be correct. But the implication is that there must be some scaffolding process by which simpler objects construct more complex objects that do construct hemoglobin. This does not get around the fact that some set of processes somewhere must randomly produce a hemoglobin molecule, and not only that, but that set must randomly produce such molecules in huge quantities, coincidentally along with all the other components of a life form that uses hemoglobin.

There are 31,536,000 seconds in a year, or about 3.2E+7. It is estimated that there are 10^80 atoms in the universe. If every atom were a protein-forming machine that could randomly assemble one trillion (10^12) proteins every second, you'd have 3.2E+99 ATTEMPTS per year. At that rate it would take about 6E+83 years to have a better than 50/50 chance of coming up with one instance of one component chain of one hemoglobin.
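The same back-of-envelope estimate can be written out in Python. The atom count and the per-atom assembly rate are the hypothetical figures used in this paragraph, not measurements; the variable names are mine.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60      # 31,536,000
ATOMS_IN_UNIVERSE = 1e80                   # estimate quoted in the text
ATTEMPTS_PER_ATOM_PER_SECOND = 1e12        # hypothetical rate from the text

attempts_per_year = (
    SECONDS_PER_YEAR * ATOMS_IN_UNIVERSE * ATTEMPTS_PER_ATOM_PER_SECOND
)

# min(N) for one 141-amino chain, using the formula from this section.
p_chain = 0.05 ** 141
attempts_needed = math.log(0.5) / math.log1p(-p_chain)

years_needed = attempts_needed / attempts_per_year
print(f"attempts per year: {attempts_per_year:.1e}")
print(f"years needed:      {years_needed:.1e}")
```

The output should land near 3.2E+99 attempts per year and on the order of 6E+83 years.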

The current age of the universe is estimated at about 1.4E+10 years. Compared to our required time to randomly construct just one hemoglobin protein chain under ridiculously optimistic conditions, the universe’s current age is for all practical purposes zero. Under the most absurdly optimal conditions, it seems likely that in a googol years Mother Nature could never randomly create the mechanisms of a single cell from unstructured molecules, but in the evolutionist religion there is no questioning this orthodoxy. It's so utterly mathematically absurd, and yet the entire field of biology will cancel you if you don't subscribe to it.

Logical Refutation

Evolutionists claim that species change into each other incrementally, requiring that each tiny enduring random change provides some survival advantage. They also insist that sequences of small changes leading to major new features would require thousands or millions of years; in fact, that is their passionate defense when explaining how such unlikely and seemingly purposeful changes could occur randomly. These two claims together require that every small change on the way to a major change must provide some substantial new advantage, otherwise natural selection would never select such changes. This requires an unbroken stream of thousands or millions of such small changes ultimately culminating in a major change.

To summarize: every tiny bodily change in a species must provide a substantial long-term survival advantage, even though the obvious final advantage (a formerly flightless species being able to fly, for example) occurs only at the end of the sequence and so is unrelated to the intermediate advantages.

By the insistence of evolutionists, each such advantageous increment would necessarily have been successful for thousands or millions of years while awaiting the next random change, which would strangely provide yet another presumably unassociated advantage while on the way to the final big advantage. The fossil record should therefore be strewn with trillions of such intermediates, providing us with a blueprint for how species incrementally enjoy long-term survival advantages at every step of the way toward becoming a new species even though such advantages are unrelated to the major advantage that occurs only when the sequence is complete.

To illustrate: Suppose we have some creature with no feathers that will someday become a bird. Over the course of millions of years, the creature will need to grow feathers. According to evolutionists, this process can occur only in small statistically believable steps, and very importantly every such small step must provide a substantial survival advantage. So our creature, which formerly presumably had a survival advantage because it was not growing feathers, will now have a new survival advantage, likely for thousands or millions of years, because it is now equipped with feather-stubs. The entire feather-producing process must require at least millions of years, because the incredibly intricate microscopic sub-structure of these feathers along with their gorgeous macro-structure must all develop by pure chance through trial-and-error, and evolutionists themselves propose such a timeline as the rationale for how such improbable events could occur.

Remember that according to our statistical analysis above, the construction of the components of a feather (much less the feather itself) is so improbable that it could never occur even once in countless trillions of universes like ours, much less in numbers large enough to ensure their survival. But let’s not belabor that point here.

The final change that delivers the quantum advantage that the creature will eventually possess due to the development of feathers (i.e. flight) will not occur until some very advanced late stage of feather development. Therefore, every intermediate step of the development must coincidentally provide a substantial other survival advantage (not related to flight), since each such step does not offer the final survival advantage. Ironically it would seem that the growing of non-functional wings and feather stubs, somehow all precisely arranged over the body so as to eventually sustain flights which will not occur until millions of years from now, would actually reduce the survival chances of the intermediate creatures.

I am not claiming that this particular “feather” transition actually occurred; I'm just using a hypothetical example to illustrate the concept. Evolution theory requires that every major transition occurring in every species must necessarily have occurred via a sequence of tiny steps, no step of which provides the final advantage except the final step, all coincidentally providing some enduring other survival advantage on the way to the final advantage.

There's a reason we have little to no evidence of the trillions of intermediaries that must have existed between the major variations within and between all the species. The reason is that it didn't happen that way. You don't need much background in statistics, biology, or chemistry to see this huge logical contradiction - but so few people do, and it's disturbing to think about how many "scientists" can't or won't. You don't need to be a "creationist" to see the logical fallacy. The only religion involved here is the idea that every small step along the way to major change must itself be substantially beneficial for eons while leaving behind no trace.

The Blind Watchmaker Has No Clothes

There seems to be no formal name for the genre of artwork that fools viewers into believing that impossible objects are possible. Most people viewing such images, I imagine, understand that there’s something “wrong” with them. But this does not seem to be the case with invalid analogies and deceptive arguments. Under such influence, it seems that the naïve person can be led to believe just about anything.

In 1986 a biologist named Richard Dawkins published a book entitled “The Blind Watchmaker,” whose purpose is to convince the reader that the impossible timelines required by purely random processes can be reduced to achievably short durations by iteratively alternating spontaneous generation and natural selection. By culling out “bad” random generators after each mutation, the remaining generators do not explore all random possibilities, nor do they spend much time uselessly reproducing previously failed iterations. By iteratively eliminating “failed” generators we arrive at a refined group that quickly produces a successful complex object.

But Dawkins makes two faulty assumptions:

1. Dawkins’ model requires that complex objects’ survival mechanisms (and therefore their genetic codes) can be gradually approximated. Thus for example the genetic pathway to a creature that has a circulatory system that makes use of hemoglobin must involve some gradually successful transition from creatures that do not make use of hemoglobin. The problem is that Dawkins’ gradual-approximation analogy isn’t valid for substantial new creature features, as I’ve already discussed in my Logical Refutation above.

2. In order for failed real-world gene replicators to be culled by natural selection, they must themselves be part of the object they are replicating. In nature, “bad” genes are eliminated due to the non-survival of the cells within which they reside and whose structures they encode. For Dawkins’ model to work, we must start with an object that is already so complex that it contains its own reproduction mechanism, implying that Dawkins’ starting point in the real world is already a living cell. In making this assumption, Dawkins fails to account for the quantum leap from non-living chemicals to the simplest self-replicating organisms.

Dawkins’ Gradual-Approximation Analogy Isn’t Valid

In chapter 3 of Dawkins’ book (“Accumulating small change”), he uses the adage about monkeys with typewriters randomly producing the works of Shakespeare. He illustrates his model of iterating pairs of generation & selection by writing a computer program that seeks to generate a single Shakespearean “target” sentence. The program starts with a random string that is subjected to random mutations of its characters (spontaneous generation). Upon each mutation, the resulting string is compared to the desired Shakespearean string; strings that more closely resemble the final string “survive” and are used as the basis of future mutations, whereas the poorer approximations “die” and cease reproducing (natural selection). He makes his point by demonstrating that the process takes many orders of magnitude less time than just constantly generating random strings. It’s a clever demonstration.
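A minimal Python sketch of the kind of program described above may help. As far as I can tell the book describes the program rather than listing it, so the brood size, the mutation rate, the fixed seed, and the choice to keep the parent in the running are my own assumptions, and the function names are hypothetical. The target phrase is the one used in his chapter.

```python
import random
import string

ALPHABET = string.ascii_uppercase + " "
TARGET = "METHINKS IT IS LIKE A WEASEL"  # target phrase from Dawkins' chapter

def score(candidate: str) -> int:
    """Number of positions that already match the target."""
    return sum(a == b for a, b in zip(candidate, TARGET))

def mutate(parent: str, rate: float, rng: random.Random) -> str:
    """Copy the parent, replacing each character with probability `rate`."""
    return "".join(
        rng.choice(ALPHABET) if rng.random() < rate else ch
        for ch in parent
    )

def weasel(offspring: int = 100, rate: float = 0.05, seed: int = 0) -> int:
    """Return the number of generations needed to reach TARGET."""
    rng = random.Random(seed)
    parent = "".join(rng.choice(ALPHABET) for _ in TARGET)
    generations = 0
    while parent != TARGET:
        brood = [mutate(parent, rate, rng) for _ in range(offspring)]
        # Selection step: keep whichever string is closest to the target.
        # Keeping the parent as a candidate means the score never decreases.
        parent = max(brood + [parent], key=score)
        generations += 1
    return generations

print("generations with selection:", weasel())
print("possible strings without it:", len(ALPHABET) ** len(TARGET))
```

Note that score() measures each candidate directly against TARGET; that comparison is the only thing driving the selection step in this sketch.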

The problem is that in the real world there is no reason to assume that intermediate genomes gradually approximating some future successful genome will necessarily survive. In Dawkins’ program, strings more closely resembling the target string survive because the assumption of the success of gradual approximations is built into the program. There is no reason to expect that real genomes behave in this way. A proto-bird with some recognizable aspects of feathers almost certainly has no more survival advantage than a proto-bird with no feathers; neither can fly. In fact, the proto-bird with partial wings and feathers would presumably suffer a disadvantage by being encumbered with useless baggage. There is neither logic nor evidence supporting the idea that complex objects with substantially different features can transform into each other via a pathway strewn with successful intermediaries. In fact, both statistics and the fossil record suggest the opposite.

Dawkins’ Analogy Does Not Model Real-World Spontaneous Generation

In Dawkins’ analogy, he starts with a character string that is already as long as the target string he seeks to emulate. This string is then manipulated by an external independent agent (his computer program) which successively mutates the string and then decides which mutations survive. But in the purposeless world whose existence Dawkins seeks to validate, there are no independent replicating devices creating genes and then dispensing with the “bad” ones. In the real world, “good” genes survive and “bad” genes disappear because genes and their associated mechanisms are contained within the organisms they encode. Without this, the survival of the organism would have no influence on whether the genetic mutations survive.

Since natural selection selects real genes indirectly by selecting the organisms containing them, Dawkins’ real-world analogy must start with a self-replicating organism at its very first step. How did Mother Nature produce this first self-replicating organism from things that were not self-replicating organisms? To explore this we must look at the smallest possible replicating organisms.

Viruses, including their simplest forms (“single-stranded RNA viruses”), do replicate, but they require host cells. Viruses are parasites and so cannot have formed prior to the more complex hosts upon which they depend. So despite being simple, viruses are not on the pathway of the earliest creatures produced by spontaneous generation.

The simplest known independent replicating organism is apparently a lab-created bacterium called JCVI-syn3.0. According to its documentation, this organism contains 473 genes. So, the simplest self-replicating organism that we’ve managed to create in a lab, which is simpler than the simplest such object found in nature, contains more than three times as many genes (473) as there are amino acids in the shortest strand of a hemoglobin molecule (141). According to its creators, 149 of those genes perform unknown functions. A theoretical organism that dispenses with these mysterious genes would still have more than twice as many genes (324) as there are amino acids in that hemoglobin strand. If each of these genes contains only one codon to encode just one amino acid, the genome of the fully understood portion of this theoretical organism would take (essentially) infinitely longer to produce via spontaneous generation than the hemoglobin strand we illustrated in the Statistical Refutation above.
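To put a rough number on that comparison, the calculation has to be done in logarithms, because 20^324 overflows an ordinary floating-point value. The sketch below uses the approximation min(N) ≈ ln(2) × 20^L, which holds when 0.05^L is tiny, and it adopts this paragraph's deliberate simplification of one amino acid per gene. The function name is hypothetical.

```python
import math

def log10_min_attempts(length: int, alphabet: int = 20) -> float:
    """log10 of min(N), using min(N) ~ ln(2) * alphabet**length."""
    return length * math.log10(alphabet) + math.log10(math.log(2))

genes_total = 473                            # JCVI-syn3.0 gene count from the text
genes_unknown = 149                          # genes of unknown function
genes_known = genes_total - genes_unknown    # 324

hemoglobin = log10_min_attempts(141)
genome = log10_min_attempts(genes_known)

print(f"hemoglobin chain: about 10^{hemoglobin:.0f} attempts")
print(f"324-unit genome:  about 10^{genome:.0f} attempts")
print(f"ratio:            about 10^{genome - hemoglobin:.0f}")
```

Under these assumptions the genome figure exceeds the hemoglobin figure by a factor of 20^183, which is what “essentially infinitely longer” amounts to here.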

For Dawkins’ analogy to work we must start with a genome that already encodes the structure of its containing cell, which is already surviving and replicating. But the genetic code of the simplest known replicating cell is harder to randomly construct than the hemoglobin molecule that we’ve shown can’t be randomly constructed.

In breezing through Dawkins’ analogy, readers don’t seem to grasp that the real-world analog of its starting point doesn’t exist. Dawkins’ computer program is iterative, but the real world of genetic mutation is recursive. Most readers, including most biologists I presume, have never imagined the concept of recursion, and therefore cannot see the flaw in the analogy.

In the real recursive world, Dawkins requires that we start with a living, replicating cell. Such a “starting” cell would need to have been created over countless eons by independent gene generators that are not contained within living, replicating cells, and which are therefore not subjected to successive applications of natural selection. Real-world primordial gene strands having no standalone survival capacity (like the text strings in Dawkins’ program) cannot dictate the fates of the generators that created them, nor could they be affected by natural selection killing off the proto-organisms (whether such organisms get created or not) that their encodings represent.

Let me restate it another way. Dawkins models the real world using a false analogy in which natural selection operates directly upon genes. In Dawkins’ computer, the “genes” (i.e. text strings) are standalone objects upon which “nature” (i.e. the computer program) operates directly, but the analogous biological genes cannot be standalone objects. Genes outside of reproducing cells do not experience iterative natural selection. Real-world genes exist within already-developed cells that replicate themselves, since natural selection operates directly upon cells, not genes.

In the world predating living objects, the actual analogy to Dawkins’ computer program would be some computer-like object (“CLO”) that manufactures genomes for some unknown reason. Since there are no living cells, these do not yet represent the genomes of living cells. Since the genes cannot self-replicate in the absence of living cells, the CLO must replicate them. The CLO might also mutate them. Since the genes are not contained within cells that experience natural selection, the CLO must subject them to a simulation of natural selection. To do this, the CLO must “know” the eventual target genome(s) in advance, since the CLO’s only standard for survival is the current genome’s similarity to the target. (It seems odd that a CLO that knows the final genome and could therefore create it in one step does not simply do so.) In any event, nothing about this model resembles the randomness in which evolutionists claim to believe.

Dawkins’ computer program is not an analogy to random spontaneous generation. It is at best a simulation of how natural selection limits the possible mutations of already-living organisms. The great hole in evolutionary theory is the question of how unorganized inanimate matter and energy assemble themselves into living organisms. Until this question is answered, the theory of evolution remains an article of faith.

Primordial Soup

In more recent decades it seems that at least some biologists have recognized the need for some kind of primordial machinery that overcomes the utterly fantastic odds against random construction of even the simplest living cell. In a paper called “The RNA World”, the author implicitly acknowledges that the automatic protein-forming machinery imagined by Miller & Urey is already so complex that some even more amazing process must have preceded it, so he postulates that some mechanism that propagates self-sufficient RNA once existed. In this world, RNA replicates itself, combines with other RNA (producing variation), survives outside the confines of a living cell, and thereby experiences natural selection. His hypothesis arises from evidence that such RNA replication has been observed as an adjunct to the mechanics of reproduction in living cells. But if such processes have been observed outside of living cells, he seems not to have mentioned it.

In a paper called “The origin of life: what we know, what we can know and what we will never know”, the authors discuss this idea in more depth and they talk about “dynamic kinetic stability.” Here the authors at least lightly discuss the general concepts of physics that seem to defy the existence of such systems. This is encouraging. They’re thinking less about already-existing living cells and more about the harsh world of physics and statistics, wherein any realistic discussions of life’s origins must take place.

But at this point it’s all still hypothesis, because no standalone gene replication systems seem to have survived into the modern world. This in itself seems a staggering contradiction: the original machinery that was robust enough to overcome the impossible odds against forming the first living cell was so inherently weak that apparently no trace of it remains today.

Summary

The theory of evolution is sold as a package. From our earliest ages we are told:

· There’s a fossil record that disputes whatever creation myths you learned elsewhere.

· Natural selection refines gene pools of living organisms by allowing only the fittest to reproduce.

These two statements are hard to dispute, so they lend credibility to whoever says them. Those same folks then say:

· Random processes caused life to arise from nonliving matter.

· Random mutation and natural selection are responsible for the diversity of life; i.e. we get new species by mutating and selecting them from other species.

My purpose is to argue that both of these statements are absurd – the first due to statistical impossibility and the second due to logical contradiction.

During the early nineteenth century, a French historian named Alexis de Tocqueville visited the USA to see what all the “democracy” fuss was about. In his report, which he entitled “Democracy In America”, in a chapter that he called “Unlimited Power Of Majority, And Its Consequences” he said:

The French, under the old monarchy, held it for a maxim that the King could do no wrong; and if he did do wrong, the blame was imputed to his advisers. This notion was highly favorable to habits of obedience, and it enabled the subject to complain of the law without ceasing to love and honor the lawgiver. The Americans entertain the same opinion with respect to the majority.

De Tocqueville’s point was that Americans had not really abolished the monarchy; rather they had replaced their old monarch with a new one, namely the “majority.” Majority rule is so ingrained in our culture that many regard it as a “given” that political policies must necessarily be correct if the majority has voted for them. As with a king, in a democracy the majority is the infallible adjudicator.

Like Americans replacing old monarchs with essentially a new monarch, evolutionists did not just abolish the old creationist gods; rather they replaced them with a new god: Random. Evolutionists insist that everything must arise randomly. Their creed, were they to write it down, might look something like this:

In the beginning Random created the heavens and the earth. And Random caused light to exist. And Random said, “Let the light randomly interact with the earth and the waters to create life,” and it was so. And Random said, “Let the waters randomly bring forth swarms of living creatures.”

And so on and so forth, and then:

On the Sabbath day, which is every day, Random said, “Let no man question whether anything could ever have arisen from anything other than Random. Thou shalt have no other gods before me.”

Of course, evolutionists would never write their creed so explicitly; in so doing they would make clear the fact that it is a creed. In a world now steadfastly dedicated to “science”, that would be a bad look.

Evolutionists have two things going for them:

1. most people have little concept of statistics, and

2. being very short-lived creatures, people regard the estimated age of our universe as a really long time.

Combine these and we have a formula for convincing the masses that by now literally anything could have happened.

There’s no quick fix for #1, but you can immunize yourself against #2 by reading a fascinating book called The Five Ages Of The Universe (subtitle “Inside the Physics of Eternity”) that lays out physicists’ recent theories of the progression of our universe through five structurally different eras spanning a googol years. If nothing else, it will radically expand your conception of time. Of course, physicists have their own “spontaneous generation” dogma (the “Big Bang”) and that book subscribes to it, but that’s a whole other topic.

Background Noise

I am a computer programmer, not a biologist. You may say that I am therefore not qualified to comment on biological matters, and usually you’d be right. However, it is also possible that a person outside the field might occasionally be best qualified to upend a belief system held by almost everyone in the field.

As a programmer I am mathematically predisposed, particularly to information theory and to statistics. A biologist might think about an RNA base pair as merely a pair of nucleotides, i.e. two chemicals - but the apparent purpose of base pairs is to encode information, so I instead jump to information-theoretical questions, like, “How many bits of information are encoded by a base pair?” and, “What are the odds of the occurrence of a particular sequence of base pairs?” This predisposition is the basis of my little paper here.
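As a small illustration of those two questions, here is how I would phrase them in Python. The sketch assumes every base is equally likely and independent of its neighbors, which is a simplification; the names are my own.

```python
import math

BASES = 4           # A, C, G, U in RNA
AMINO_ACIDS = 20    # standard amino acids

bits_per_base = math.log2(BASES)           # information in one base position
bits_per_codon = 3 * bits_per_base         # a codon is three bases
bits_per_amino = math.log2(AMINO_ACIDS)    # information in one amino choice

def sequence_probability(n_bases: int) -> float:
    """Chance of one specific sequence of n_bases under the uniform assumption."""
    return BASES ** -n_bases

print(f"bits per base:  {bits_per_base}")
print(f"bits per codon: {bits_per_codon}")
print(f"bits per amino: {bits_per_amino:.2f}")
print(f"P(specific 30-base sequence) = {sequence_probability(30):.1e}")
```

A codon carries more bits than are needed to pick one of 20 amino acids, which is why several codons can map to the same amino acid.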

Evolutionary theory is famous for upending traditional religious beliefs, so let me say that I have no religious motivation in criticizing evolution other than to attack the quasi-religious aspects of the theory itself. I ask that readers refrain from insisting that my arguments constitute an endorsement of any other explanation, religious or otherwise. There is no requirement that humanity have an explanation for everything. My answer to the question of how living things got here is, “I have no idea.”

Let me also emphatically say that I do not dispute the validity of the fossil record or of demonstrable aspects of natural selection, which together constitute half of evolutionary theory. My dispute is with the “spontaneous generation” part.

Actually, my larger dispute is with the notion of indoctrinating the masses with the idea that theory is fact. “We have a new paradigm, you see, that nicely explains some things, and which therefore must be presumed to explain everything.” This dogma has afflicted us for centuries in the political world, where newly imagined government systems are presumed to be the solution to humanity’s problems of poverty, inequity, and so forth. “Our new system, you see, will solve all of this; all we need is to indoctrinate a new generation and wait for the old generation to die out. Then, finally, humanity will achieve Nirvana.” We’ve tried that, and it’s been a disaster.

Finally, I am a big fan of education. If readers wish to educate me by pointing out flaws in my logic or my computations, especially by referring me to rational literature on the subject, I’m more than happy to change my opinion.